Succeeding in ML operations journeys

As machine learning becomes increasingly prevalent in the business world, more and more enterprises are considering the cloud as a way to scale their machine learning efforts.

The promise of AI and ML to deliver significant competitive advantages and contribute to organizational growth is now well established. Organizations, big and small, are adopting these technologies to drive their strategic business goals.

However, moving machine learning workloads to the cloud has its own challenges. Organizations are realizing that operational and support requirements increase rapidly as data science and modeling teams adopt emerging AI/ML platforms on the cloud.

Machine learning operations, or MLOps for short, differs significantly from traditional software development practices and requires a different way of thinking about how machine learning models are developed, deployed, and maintained. This shift can be difficult for the teams that govern, enable, and support public cloud-based capabilities, because they are used to more traditional approaches. It requires a change in culture and mindset.

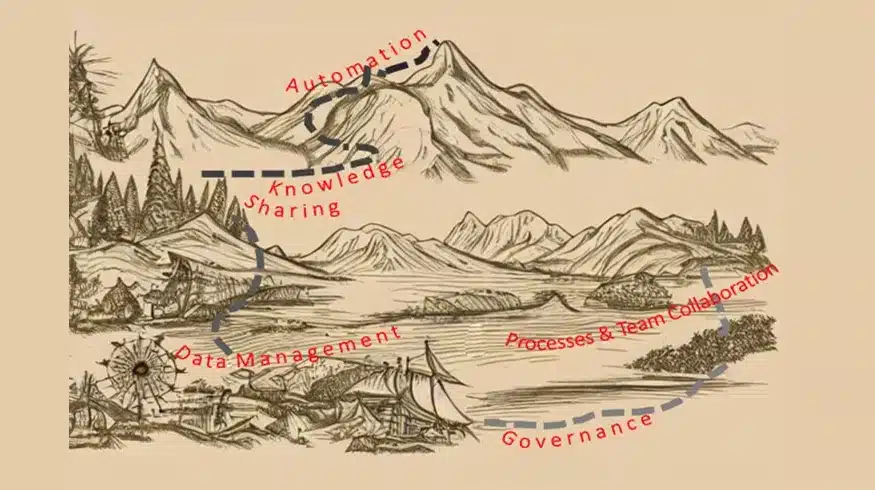

The power of AI/ML models and platforms, and the potential value they can deliver, is growing quickly. Adopting them rapidly is becoming critical for firms that want to remain competitive. However, adopting them successfully at scale requires public cloud technologies. Public cloud adoption in many industries is still in its early stages, with many internal groups to coordinate and processes and standards that are still evolving. By establishing clear ownership for MLOps and DataOps and simplifying adoption through templates and automation, firms can overcome these challenges and scale AI/ML on the cloud while remaining agile and cost-effective.

To learn more about the key challenges, along with our lessons learned and best practices, download the perspective paper.
